¶ Status Quo -- AI on 1 January 2026

As of: January 2026. All figures are based on publicly available sources.

¶ The Situation in Three Sentences

In January 2026, AI systems are improving faster than humans can follow. Large language models pass bar exams, diagnose diseases and write production-ready code. The threshold of self-optimisation has been crossed.

¶ What Happened in Twelve Months

Between the start of 2025 and January 2026, the AI landscape shifted fundamentally:

- OpenAI runs internal research teams in which AI agents autonomously review code, propose architectures and evaluate benchmarks [1].

- Anthropic demonstrates that Claude independently reads scientific papers, formulates hypotheses and designs experiments [2].

- Google DeepMind reports that Gemini contributed to the development of its own successor version -- measurably, not as a marketing claim [3].

The common denominator: AI writes code that builds better AI. It tests itself, corrects its own errors, optimises its own architecture.

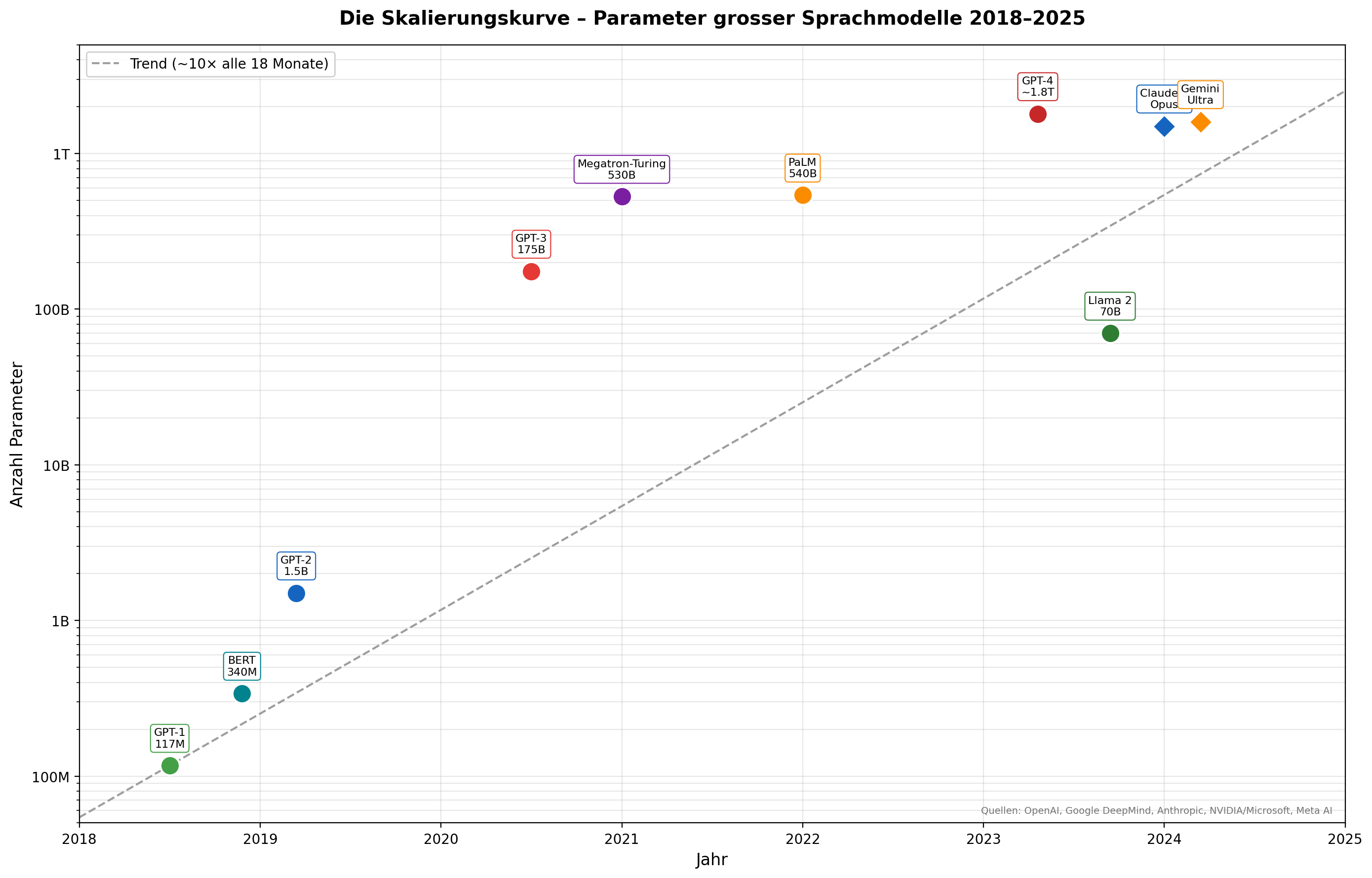

¶ Scaling Laws -- Bigger Means Better

In January 2020, Jared Kaplan and colleagues at OpenAI published a finding that became the central doctrine of the AI industry: the test loss of neural language models falls predictably, following a power law, as three factors are scaled up -- parameters, training data and compute -- provided none of them is held back by the other two [4].

No new algorithms needed. Simply: bigger.
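For the parameter axis, Kaplan et al. fit the loss (in nats per token) of models trained to convergence on sufficiently large datasets as L(N) = (N_c / N)^α_N, reporting α_N ≈ 0.076 and N_c ≈ 8.8 × 10^13 non-embedding parameters [4]. The minimal sketch below plugs in those published fits to show what "predictably" means: every tenfold increase in parameters multiplies the loss by the same factor. The function names and example model sizes are illustrative assumptions, and the constants describe only the regime studied in the paper, not today's frontier models.

```python
"""Sketch of the parameter scaling law from Kaplan et al. (2020).

Assumption: the constants below are the fitted values reported in the
paper for non-embedding parameters, with loss in nats per token. They
describe the paper's experimental regime only.
"""

ALPHA_N = 0.076  # fitted power-law exponent for model size
N_C = 8.8e13     # fitted constant with the units of a parameter count


def loss_from_params(n_params: float) -> float:
    """Predicted test loss for a model with n_params non-embedding parameters,
    assuming training data and compute are not the bottleneck."""
    return (N_C / n_params) ** ALPHA_N


def loss_ratio_per_tenfold() -> float:
    """Factor by which the predicted loss changes when N grows tenfold.

    Because L(N) is a power law, this factor is the same at every scale:
    (1/10) ** ALPHA_N.
    """
    return 10 ** (-ALPHA_N)


if __name__ == "__main__":
    # Hypothetical model sizes, each ten times larger than the previous one
    for n in (1.5e9, 15e9, 150e9):
        print(f"N = {n:.1e}  ->  predicted loss {loss_from_params(n):.3f} nats")
    print(f"loss ratio per tenfold increase: {loss_ratio_per_tenfold():.3f}")
```

Each printed loss is about 0.84 times the previous one, roughly a 16 percent reduction per tenfold increase in size. That constant ratio is what made scaling plannable: the gain from the next order of magnitude could be estimated before training began.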

| Model | Year | Parameters |
|---|---|---|
| GPT-1 | 2018 | 117 million |
| GPT-2 | 2019 | 1.5 billion |
| GPT-3 | 2020 | 175 billion |
| GPT-4 | 2023 | ~1.8 trillion (estimated) |
| Claude 3 Opus | 2024 | not disclosed |
| Gemini Ultra | 2024 | not disclosed |

From GPT-1 to GPT-4, the parameter count grew roughly tenfold every 18 months on average [1] [3]; for newer models, the developers no longer disclose it.
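The growth rate can be checked against the table itself. The minimal sketch below uses only the four GPT rows, including the unofficial GPT-4 estimate, and whole release years rather than exact dates, so it is a rough consistency check, not a measurement. The helper names are illustrative.

```python
"""Rough check of the parameter growth rate using the GPT rows of the table.

Assumptions: whole release years only (no months) and the unofficial
~1.8 trillion estimate for GPT-4. Undisclosed models are left out.
"""
import math

# (model, release year, parameter count) taken from the table above
GPT_MODELS = [
    ("GPT-1", 2018, 117e6),
    ("GPT-2", 2019, 1.5e9),
    ("GPT-3", 2020, 175e9),
    ("GPT-4", 2023, 1.8e12),  # estimate, not an official figure
]


def months_per_tenfold(p_start: float, p_end: float, years: float) -> float:
    """Months needed per tenfold increase, assuming steady exponential growth."""
    orders_of_magnitude = math.log10(p_end / p_start)
    return 12 * years / orders_of_magnitude


def step_rates(models):
    """Yield (from, to, growth factor, months per tenfold) for consecutive models."""
    for (name_a, year_a, p_a), (name_b, year_b, p_b) in zip(models, models[1:]):
        yield name_a, name_b, p_b / p_a, months_per_tenfold(p_a, p_b, year_b - year_a)


if __name__ == "__main__":
    for a, b, factor, months in step_rates(GPT_MODELS):
        print(f"{a} -> {b}: x{factor:.0f}, about {months:.0f} months per tenfold")
    first, last = GPT_MODELS[0], GPT_MODELS[-1]
    overall = months_per_tenfold(first[2], last[2], last[1] - first[1])
    print(f"{first[0]} -> {last[0]} overall: about {overall:.0f} months per tenfold")
```

With these inputs, the endpoints give roughly 14 months per tenfold increase, but the individual steps range from about 6 months (GPT-2 to GPT-3) to about 36 months (GPT-3 to GPT-4). Any single rate, including the 18-month figure, is therefore a coarse average over a very uneven trend.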

¶ The Practical Singularity

The mathematician Vernor Vinge popularised the term "technological singularity" with his 1993 essay -- that hypothetical point at which AI systems improve faster than humans can follow [5]. Ray Kurzweil estimated the date as 2045 [6]. He was off by almost twenty years -- and too cautious, not too bold.

What occurred in early 2026 was not a science-fiction singularity. It was a practical singularity: AI overtook humans where it counts -- at work.

An example: Anthropic's Claude analyses a codebase of one million lines, identifies a subtle bug in the concurrency logic and delivers a correct, tested fix. In minutes. A human expert would need days [2].

¶ What This Means

AI development is no longer linear progress. It is a self-accelerating process. Each new model generation contributes to the development of the next. Humans remain involved -- but their role has shifted: from creator to overseer.

For Switzerland -- with its world-class research at ETH, EPFL and IDSIA -- this is simultaneously an enormous opportunity and an existential challenge.

¶ Bibliography

[1] OpenAI: GPT-4 Technical Report. arxiv.org, March 2023.

[2] Anthropic: The Claude 3 Model Family -- Opus, Sonnet, Haiku (model card). anthropic.com, March 2024.

[3] Google DeepMind: Gemini -- A Family of Highly Capable Multimodal Models. arxiv.org, December 2023.

[4] Kaplan, Jared et al.: Scaling Laws for Neural Language Models. OpenAI, January 2020.

[5] Vinge, Vernor: The Coming Technological Singularity. VISION-21 Symposium, NASA, 1993.

[6] Kurzweil, Ray: The Singularity Is Near: When Humans Transcend Biology. Viking, 2005.