¶ What AI Can Do Today

As of: January 2026. Overview of the capabilities of current AI systems.

¶ Large Language Models -- the Foundation

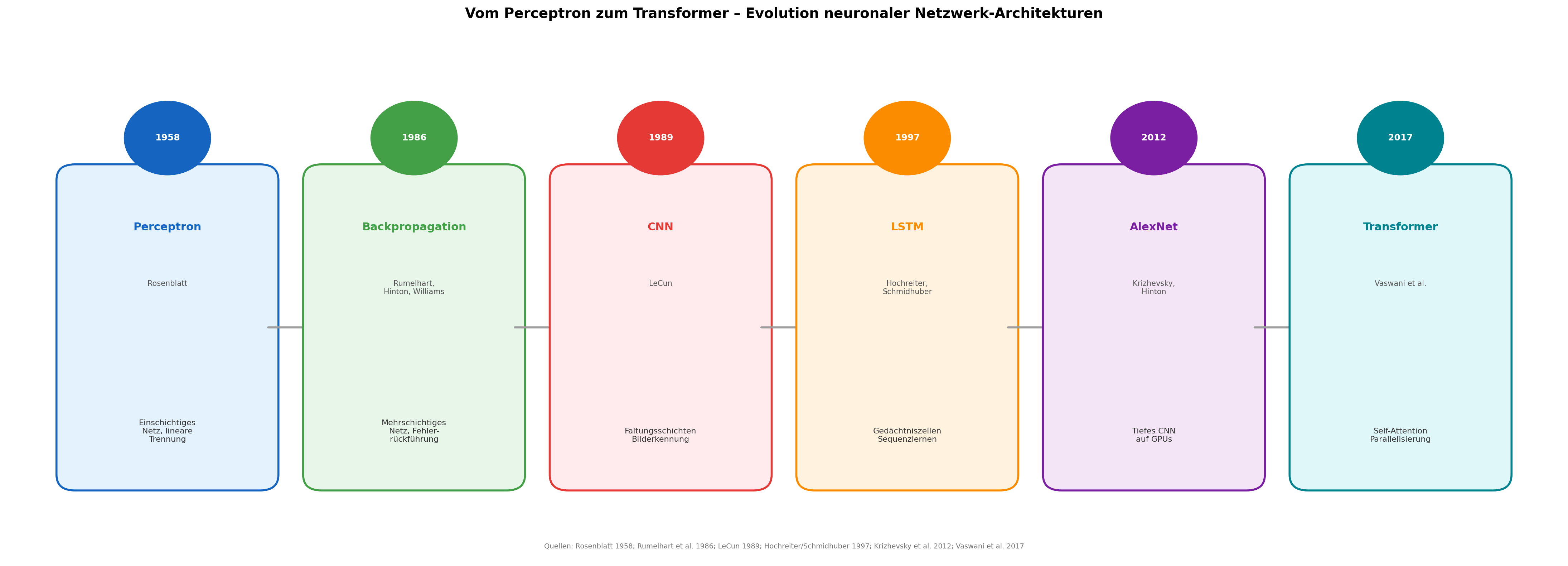

At the heart of the current AI revolution are large language models (LLMs). The principle: a neural network with hundreds of billions of parameters is trained on vast quantities of text (books, websites, scientific papers, code). It is not trained for any particular task. It simply learns to predict the next piece of text, and in doing so it picks up the structure of language itself [1].
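The training idea can be made concrete with next-word prediction, the objective commonly used to train such models. The sketch below is a deliberately tiny stand-in: it only counts which word follows which, whereas a real LLM uses a neural network and sub-word tokens. The function names `train_bigram` and `predict_next` and the sample corpus are assumptions made for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical miniature corpus; real training data spans billions of documents.
corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram(corpus)
print(predict_next(model, "the"))  # prints "cat"
```

Nobody tells the model that "cat" is a noun or that it tends to follow "the". That regularity is read off the text alone, which is the sense in which structure is learned without a task-specific goal.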

The decisive architecture is called the Transformer, presented in 2017 in the paper "Attention Is All You Need" by Vaswani and seven other Google researchers [2]. The Transformer considers all words in a text simultaneously and calculates for each word how strongly it relates to every other word (self-attention). Compared with the recurrent networks that preceded it, which read a text one word at a time, this captures long-range relationships better and can be computed massively in parallel, which suits modern GPU hardware.
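The self-attention step can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the full Transformer layer: each word vector serves as its own query, key and value, whereas the paper applies learned projection matrices and several attention heads. The function names and the two-dimensional example embeddings are hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equally long vectors."""
    return sum(x * y for x, y in zip(a, b))


def self_attention(vectors):
    """Scaled dot-product self-attention without learned projections.

    Returns (outputs, weights), where weights[i][j] says how strongly
    word i attends to word j.
    """
    scale = math.sqrt(len(vectors[0]))
    outputs, weights = [], []
    for query in vectors:
        # Compare this word with every word in the text, itself included.
        row = softmax([dot(query, key) / scale for key in vectors])
        weights.append(row)
        # The output is the attention-weighted average of all word vectors.
        outputs.append([
            sum(w * value[d] for w, value in zip(row, vectors))
            for d in range(len(query))
        ])
    return outputs, weights


# Hypothetical embeddings for a three-word text.
embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
outputs, weights = self_attention(embeddings)
for row in weights:
    print([round(w, 2) for w in row])
```

Each pass through the loop depends only on the input vectors, not on the result for any other word. That independence is what lets real implementations compute all rows at once as matrix multiplications on a GPU.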

¶ Emergent Capabilities -- What Nobody Programmed

The most astonishing aspect of large language models is that they develop capabilities that nobody explicitly programmed. Beyond a certain model size, new competences suddenly appear -- so-called emergent capabilities [3]:

- Logical reasoning: Multi-step inferences that were not contained in the training data

- Code generation: Writing functioning program code in dozens of languages

- Mathematical reasoning: Solving simple to intermediate mathematical problems

- Summarisation and analysis: Condensing and evaluating complex documents

- Multilingualism: Fluent translation between over 100 languages

These capabilities are not the result of targeted programming. They emerge as a side effect of sheer scale and data volume -- a phenomenon that surprised the AI research community itself [3].

¶ Multimodal Systems -- More Than Text

Since 2023, leading AI models have processed not only text but several modalities at once:

| Modality | Example |
|---|---|
| Text | Essays, contracts, code, poetry |
| Images | Analyse a photo of a fridge, suggest a recipe |
| Audio | Speech recognition, simultaneous interpretation |
| Video | Describe scenes, summarise content |

GPT-4 (March 2023) was the first widely used model to accept image input alongside text, although image input reached most users only later that year. Google Gemini and Anthropic Claude followed with their own multimodal capabilities [4] [5] [6].

¶ Performance in Human Examinations

AI systems now pass examinations that are demanding for humans:

| Examination | GPT-4 Result | Human Reference |
|---|---|---|
| US Bar Exam | Top 10% | 50th percentile |
| Medical Licensing (USMLE) | over 90% in all 3 parts | pass threshold at ~60% |
| Biology Olympiad | passed | -- |
| SAT Mathematics | 700/800 | 528/800 |

GPT-3.5 had scored among the bottom 10% of test takers on the bar exam. GPT-4, released only months later, ranked among the top 10%. The rate of improvement is unprecedented [4].

¶ Code Generation -- the End of a Job Profile

In early 2026, Anthropic's Claude identifies bugs in complex program code faster than experienced developers, and delivers the fix as well [5]. Not occasionally, but systematically:

- Nested logic errors in distributed systems

- Race conditions in parallel architectures

- Subtle security vulnerabilities that human reviewers overlook

The role of the programmer is shifting: no longer the person who writes code, but the person who understands the problem and reviews the result.

¶ Adoption -- the Fastest in History

ChatGPT achieved an unprecedented rate of adoption after its release on 30 November 2022 [7]:

| Service | Time to 1 million users |
|---|---|
| Netflix | 3.5 years |
| | 10 months |
| Spotify | 5 months |
| | 2.5 months |
| ChatGPT | 5 days |

Within two months, an estimated 100 million people were using ChatGPT every month. The Swiss bank UBS called it the fastest adoption of a consumer application in internet history [7].

¶ What AI Cannot Do

Despite all progress, current AI systems have clear limitations:

- Hallucinations: AI sometimes invents facts and states them with the same confidence as correct answers

- No real-time world knowledge: Without access to external tools such as web search, knowledge ends at the training cutoff

- No consciousness: AI simulates understanding but possesses no consciousness and no intentions

- No physical interaction: Purely digital capabilities, no physical dexterity

The boundary between what AI can and cannot do is, however, shifting faster than any forecast predicted.

¶ Bibliography

[1] OpenAI: GPT-4 Technical Report. arxiv.org, March 2023.

[2] Vaswani, Ashish et al.: Attention Is All You Need. NeurIPS, 2017.

[3] Wei, Jason et al.: Emergent Abilities of Large Language Models. Transactions on Machine Learning Research, 2022.

[4] OpenAI: GPT-4 Technical Report. arxiv.org, March 2023.

[5] Anthropic: Claude 3 Technical Report. anthropic.com, March 2024.

[6] Google DeepMind: Gemini -- A Family of Highly Capable Multimodal Models. December 2023.

[7] UBS Evidence Lab: ChatGPT -- The Fastest Growing Consumer Application in History. February 2023.